A research paper exploring FIU students' preference for AI or more traditional tutoring methods. Survey answers were taken from real FIU students whose identities will remain anonymous.

Introduction

Publishing, engineering, medicine, even computer science: what do all of these disciplines have in common? Each and every one of them requires some level of writing. Whether it's reports, proposals, or even just simple emails, writing can be found in almost every profession imaginable. As such, students must use their time in school to hone their writing abilities in order to be prepared for the professional world. That is where the role of a writing tutor comes in. Traditionally, institutions like universities would provide students with writing tutors for free via centers much like FIU's own writing center, or students would seek out private services for a fee. However, as tools like AI have continued to improve, students are turning to AI tutors in order to obtain almost instantaneous feedback on their work. This raises the question: will AI tutoring become the new go-to for students? If so, what does that mean for the future of traditional writing tutors?

As an English major who wants to go into the world of publishing as an editor, I find the continuous advancement of AI constantly looming in the back of my mind. The threat of automation is nothing new to our society. Throughout the decades we've seen entire professions wiped out due to technological advancement. Phone operators, human computers, and many factory positions like sorting and assembly have disappeared, and jobs like cashiering and administrative assistant roles are predicted to decline as well. It makes sense: AI is meant to do these tasks more quickly and efficiently than the average human, and with far fewer errors, making it especially alluring to students with tight schedules and budgets like myself. However, in this automated world some are beginning to understand the value of quality, especially when it comes to learning. In this essay we will explore which mode of learning students here at FIU and around the world prefer, and maybe find a way to counter .

Literature Review

The modality of learning has been a question on many people's minds. Though AI tutoring is relatively new, a number of studies have already analyzed not just student preferences between AI and traditional tutoring but also the overall quality and efficiency of these two tools. Starting with student preference, the studies by Escalante, Pack, & Barrett (2023), Kashiha (2025), and Lo, Wong, & Chan (2025) found that students showed a clear preference for human tutors over AI algorithms. In one study, approximately 87.2% of student participants preferred human feedback, even after engaging with AI tutors on their paper (Le et al. 19). Students often cite improvement in both written and spoken skills, higher levels of engagement, and the ability to ask follow-up questions as reasons why they much preferred human tutoring over AI (Escalante et al. 13). This high percentage showing preference towards human tutors may also be an indicator of a concept known as “AI aversion,” in which preexisting negative notions of AI influence a person's openness to engaging with AI tools (Le et al. 2). However, this doesn't mean that students' views on AI were entirely negative. Many students praised AI feedback for its clarity, consistency, speed, and specificity (Escalante et al. 13). Though many opted for human tutors, many other students across the studies also expressed the desire for AI-human collaboration, feeling that the use of both may compensate for the other's weaknesses.

Even though students' perceptions of AI and human tutors are important factors to consider in discussions of possibly implementing AI into educational systems, we must also consider whether students themselves understand the value of feedback in their work. Rafael A. Calvo and Robert A. Ellis's article “Students' Conceptions of Tutor and Automated Feedback in Professional Writing,” a study similar to those mentioned above, asked students how they viewed feedback itself. The study found that students who regarded feedback as cohesive (feedback is viewed as a way to deepen understanding and improve both ideas and communication) found greater value in both tutoring modes than those who viewed feedback as fragmented (feedback is just a set of instructions or corrections used to complete a task, namely the paper) (Calvo & Ellis 5). This means that, regardless of the source of the feedback, students with a cohesive view could still find a way to implement it into their writing and make improvements, as they were more open to properly using feedback. This adds another layer that we must consider when looking at not just the future of AI in schools, but the future of writing tutoring.

Though students are showing a greater preference towards human assistance, this doesn't mean that AI is not a tool capable of improving students' academic performance in writing. In a majority of the studies published in Artificial Intelligence in Education (2025) and Learning and Instruction (2024), as well as the studies mentioned earlier, many forms of AI proved capable of giving a solid foundation of feedback that students may build on in order to improve their writing. In an early study of the issue from 2010, students “[v]iew automated and human feedback as fulfilling the same need” (Calvo & Ellis 11). AI also has the unique advantage of saving educators time as well as keeping students engaged for longer periods of time (Gurung et al. 7). It also shows great promise in terms of clarity, accessibility, speed, and consistency, allowing students to still access good-quality feedback even when tutors or teachers are unavailable (Escalante et al. 13). So, to rule out AI's use in the classroom entirely could be a disservice to the advancement of education. That being said, to take the opposite extreme and replace human tutors with AI entirely would be an even greater disadvantage to students, as they would lose out on human connection and motivation with the material (Gurung et al. 8). Many studies suggest a joint approach, with teachers utilizing AI as another tool in their classroom. One study found that, “On average, students in the human-AI group progressed at a significantly higher rate of 0.2 grade levels per month” (Gurung et al. 8).

Method

The aim of this particular study is to understand students' thoughts on AI-generated feedback (whether they find the feedback given to be substantial enough to help their writing process) and to determine which method of tutoring students generally prefer. In order to gauge students' feelings, a survey, which can be found in Appendix A, was created and sent out to FIU undergraduate students across five writing-centered classes. Students within these classes were from a variety of different majors, not just English. Students were sent the survey via email and were under no obligation to complete it, nor were they offered any incentives such as extra credit. The results of the survey were then tallied in order to create a clearer picture of student preferences and opinions.

Sample

Across the five classes, a total of nineteen students participated in the survey, a majority of whom were either juniors or seniors, meaning that they have been at FIU long enough to at least be aware of the writing center. Students who participated were from a variety of different majors like political science, psychology, and criminal sciences, though again a majority came from English-focused majors like creative writing and literature.

Results

Of the students who participated, 68.4% reported having used the assistance of a (human) writing tutor either through FIU or by other means, with 31.6% stating that they saw a writing tutor regularly and 36.8% stating that they had used a writing tutor only once. Meanwhile, the same share of students (68.4%) stated that they used AI tools like ChatGPT, Copilot, Gemini, and Grammarly to provide feedback on or edit their papers. However, a staggering 52.6% used AI tools regularly, while only 15.8%, or 3 people, used them once.

Percentage of students who have used the services of human tutors

Percentage of students who have used AI tutors
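The percentages above can be checked against the sample size with a short calculation. The sketch below is only an illustration, not part of the survey instrument: the counts of 13 tutor users, 13 AI users, and 3 one-time AI users are stated in this paper, while the counts of 6, 7, and 10 are assumptions inferred from the reported percentages and the sample of 19 students. The function name `to_percent` and the dictionary labels are hypothetical.

```python
# Assumed sample size, taken from the Sample section (nineteen respondents).
TOTAL_RESPONDENTS = 19


def to_percent(count, total=TOTAL_RESPONDENTS):
    """Return count as a percentage of total, rounded to one decimal place."""
    return round(100 * count / total, 1)


# Counts of 6, 7, and 10 are inferred from the reported percentages (assumption);
# 13 and 3 are stated directly in the paper.
counts = {
    "human tutor, any use": 13,
    "human tutor, regularly": 6,
    "human tutor, once": 7,
    "AI tools, any use": 13,
    "AI tools, regularly": 10,
    "AI tools, once": 3,
}

percentages = {label: to_percent(n) for label, n in counts.items()}

for label, pct in percentages.items():
    print(f"{label}: {pct}%")
```

Under these assumed counts, the regular and one-time users in each group add up to the 13 students who reported any use, which matches the 68.4% figure for both modes.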

Despite a majority of participants using AI tools regularly, the second half of the survey revealed some surprising results. When asked whether a particular mode of tutoring had provided them with quality feedback with which to improve their paper, students showed a clear preference towards writing tutors, with 73.7% agreeing that writing tutors provided quality feedback. In contrast, only 21.1% agreed that AI tools had provided them with quality feedback, while the other 78.9% either stayed neutral or disagreed entirely. One student wrote, “AI is mostly frustrating to work with. Sometimes I like to give it my work and ask for its opinion. However, it will largely tell you that your work is good even when it isn't. It doesn't critically think so it can't break down any issue or possible improvements” (Student Participant #1).

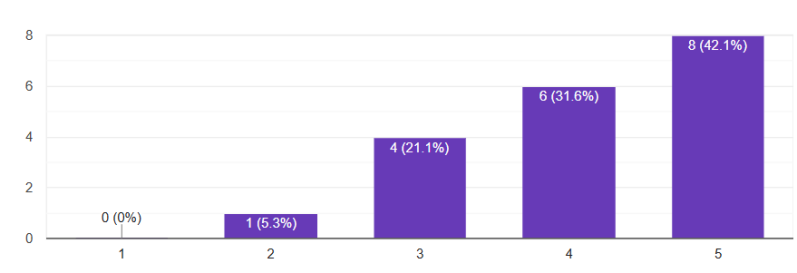

Students' level of agreement on whether writing tutors had provided them with quality feedback (1 = strongly disagree, 5 = strongly agree)

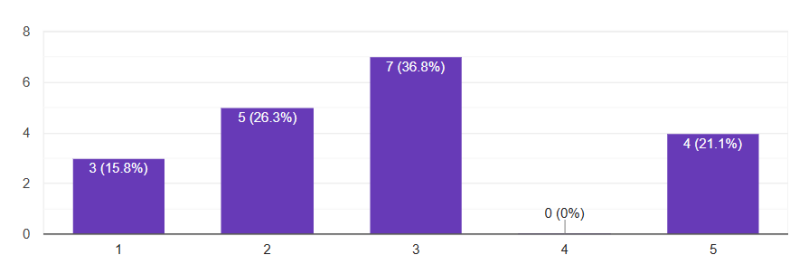

Students' level of agreement on whether AI had provided them with quality feedback

Students' level of agreement that feedback from writing tutors led to an improvement in grades

Students' level of agreement that AI feedback led to an improvement in grades

Opinion is one thing, but results are another, so in the next question we asked students if using AI or human tutors had resulted in an improvement in their assignment grade. Without a formal experiment it is difficult to prove definitively the effectiveness of either form of tutoring, so this question was crafted as a way to gather at least some quantifiable data on that end. Quantifiable and, more importantly, noticeable improvement would give an idea of the level of effectiveness. Again human tutoring pulled ahead, as more students agreed or strongly agreed that they had seen an improvement in their grade. AI, on the other hand, saw even lower agreement, with 50% of students disagreeing that AI had made any noticeable improvement grade-wise.

Finally, in the last section of the survey, students were asked if they would use, or continue to use, either mode of tutoring and if they would recommend this mode of tutoring to their peers. Unsurprisingly, human writing tutors were by far the favorite, with 68.4% agreeing that they would either continue or start visiting a writing tutor. In addition, an astounding 94.4% agreed that they would recommend a writing tutor to assist their peers with their writing. One student wrote, “Writing tutors understand what you say when you tell them what you need help with. They can critically think, and they use that to help you improve your work. You can also brainstorm with a tutor” (Student Participant #2). This showcases the desire for connection seen in earlier studies, which may explain the overwhelming support seen in the survey so far. AI tools again fell behind, with only 26.3% stating that they would use, or continue to use, AI as a tool for feedback in their writing. Even fewer (20.8%) said that they would recommend using AI regularly.

Students' level of agreement on whether they would use, or continue to use, writing tutors

Students' level of agreement on whether they would use, or continue to use, AI

Students' level of agreement on whether they would recommend writing tutors to peers

Students' level of agreement on whether they would recommend AI tutors to peers

So, despite over half of participants using AI tools, and using them regularly, the general feeling regarding AI still seems to lean strongly negative. Also, unlike Calvo & Ellis's study, which tied negative perceptions of automated feedback to students' perception of feedback itself, 84.2% of participants within this study viewed feedback as cohesive, meaning they already understood the value feedback can bring to a paper and still found little value in AI feedback.

Limitations

Only 19 students participated in the survey, in part due to the approaching finals week and the short timeframe in which the survey window was open (approximately one week). There is also the factor that, because the survey was distributed in writing-focused classes, a majority of the participating students come from English-centered disciplines like literature. Since the 2023 Hollywood writers' strike, in which thousands of writers went on strike partly due to concerns about AI replacing human writers, there has been a strong dislike of AI within the writing and creative community. Compound this with the fact that AI has led to a rise in cheating on papers and other academic work, and there may be a strong case for “AI aversion” among students.

Finally, one major limitation of the survey results concerns students without relevant experience. Of the 19 students who participated, 13 used writing tutors and 13 used AI. This means that in the second half of the survey, which tested student agreement, six students should have stayed neutral across all questions about a given mode of tutoring because they had no experience with it. However, across most questions, especially the ones regarding AI, at least one student without experience with that mode of tutoring shared opinions on the quality of feedback, improvement in grades, and recommending it to peers. So, even though these students may not have any experience with AI or human tutors, they are still giving opinions, which may be negatively impacting results.

Discussion

In terms of students' preferences, the results of the survey reflected those of the previous studies examined, with students showing a strong preference for human writing tutors across all questions asked in the survey. One student even wrote, “Writing tutors have been very useful to me, I feel the writing center at FIU should be more heavily integrated into courses in general” (Student Participant #3). However, this is where the similarities between studies end. Despite students understanding the role of feedback within writing, a majority did not find feedback given by AI tools to be helpful in improving their paper, nor did they see any significant improvement in their assignment grade. This level of disagreement with or dislike of AI tools may be a sign of “AI aversion,” and as stated before with the writers' strike, English majors may already have developed a strong dislike towards AI tools. We also must recognize that current public opinion regarding AI in general has taken a turn to the negative, especially among younger generations. The environmental impacts, issues with deepfake videos, and entry-level positions becoming automated have all left a sour taste in the mouths of many.

To disregard the use of AI in education entirely may still be a disservice to students; however, implementing it in classrooms, at least right now, may not prove as successful as schools may think. The fact of the matter is that AI technology is being rapidly pushed and implemented into society as a sort of “fix-all” solution for larger companies, but it still has many areas in which it must improve before some will consider it a proper tool for education. Collaboration between tutor and AI is very much possible, but very few will support it unless these preexisting issues are dealt with and trust can once again be established.

Works Cited

Noble Lo, Alan Wong, Sumie Chan, The impact of generative AI on essay revisions and student engagement, Computers and Education Open, Volume 9, 2025, 100249, ISSN 2666-5573, https://doi.org/10.1016/j.caeo.2025.100249.

Escalante, J., Pack, A. & Barrett, A. AI-generated feedback on writing: insights into efficacy and ENL student preference. Int J Educ Technol High Educ 20, 57 (2023). https://doi.org/10.1186/s41239-023-00425-2

Kashiha, H. “Rethinking Feedback Practices in Academic Writing through the Lens of ChatGPT-Generated and Instructor Feedback on the Same Set of Introduction Sections Written by L2 Students.” Journal of Second Language Writing, vol. 66, 2025, article 100979. ScienceDirect, https://doi.org/10.1016/j.jslw.2025.100979

Jacob Steiss, Tamara Tate, Steve Graham, Jazmin Cruz, Michael Hebert, Jiali Wang, Youngsun Moon, Waverly Tseng, Mark Warschauer, Carol Booth Olson, Comparing the quality of human and ChatGPT feedback of students’ writing, Learning and Instruction, Volume 91, 2024, https://doi.org/10.1016/j.learninstruc.2024.101894.

Kritish Pahi, Shiplu Hawlader, Eric Hicks, Alina Zaman, Vinhthuy Phan, Enhancing active learning through collaboration between human teachers and generative AI, Computers and Education Open, Volume 6, 2024, https://doi.org/10.1016/j.caeo.2024.100183.

Calvo, Rafael A., and Robert A. Ellis. “Students’ Conceptions of Tutor and Automated Feedback in Professional Writing.” Journal of Engineering Education, vol. 99, no. 4, Oct. 2010, pp. 427–438. Wiley Online Library, https://doi.org/10.1002/j.2168-9830.2010.tb01072.x.

Le, Huixiao, et al. “Breaking Human Dominance: Investigating Learners' Preferences for Learning Feedback from Generative AI and Human Tutors.” British Journal of Educational Technology, first published 4 July 2025, https://doi.org/10.1111/bjet.13614.